Examining procurement “red flags” in Latin America with data

For anyone interested in exploring how open data might help make public contracting more honest and efficient, Latin America is a great place to start. The region is home to almost half of the world’s OCDS publishers and some of the largest and most complete contracting datasets. And crucially, data quality is now high enough in several jurisdictions to be used for improving public procurement outcomes.

It’s impossible to manually track and monitor hundreds of thousands of contracting processes. Publicly accessible open data can make the task manageable, and the results more reliable and credible, when it is used to build automated analytics that detect contracting processes needing further human investigation. So in early June, procurement practitioners from across the region gathered in Santiago for a workshop on “red flags”: risk indicators that can help identify potential wrongdoing or inefficiencies in contracting processes, such as:

- short tender periods

- low number of bidders

- low percentage of contracts awarded competitively

- high percentage of contracts with amendments

- large discrepancies between award value and final contract amount.

The participants, who came from government, oversight authorities, civil society and media in seven countries (Argentina, Colombia, Peru, Honduras, Chile, Paraguay and Mexico), shared approaches and tools for identifying procurement risks that affect public integrity and efficiency. They also explored how to use Open Contracting Data Standard (OCDS) data to build reliable, low-cost red flag models, with tangible examples from several countries.

Now in Chile at the Red Flags event, @K_Wikrent gives us an introduction to @ocdata and how to use them to calculate and detect these flags pic.twitter.com/80I2bS7T2n

— Yohanna Lisnichuk (@YohaLisnichuk) June 4, 2019

Building & testing simple red flag tools

In a hands-on data expedition adapted from the School of Data’s methodology, each group calculated meaningful red flags by exploring the OCDS data available from their own jurisdiction. They chose which red flag they wished to analyze and applied their mini-model to a small sample of data that we had prepared for them in advance. These are the red flags they selected and how far they got in half a day:

- Buenos Aires, indicator: competitive procurement

The city’s procurement director, Marisa Tojo, analyzed the percentage of processes that were awarded competitively. According to the city’s procurement legislation, goods and services should generally be procured through open tenders to promote free competition and transparency. This indicator helps detect processes tailored to particular bidders, or poor planning by the government’s various purchasing units. She managed to calculate the indicator as a share of procurement processes, and also compared the value of competitively awarded contracts with the total contracted amount.
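The Buenos Aires indicator can be sketched in a few lines, computed both ways the team tried: by count and by value. This is a minimal sketch, not the team’s actual method. It assumes each process is an OCDS release parsed into a Python dict, and it treats `tender.procurementMethod == "open"` as “competitive”, which is an assumption: local rules may count other methods too. The sample data is made up.

```python
# Assumption: only "open" counts as competitive; adjust to local legislation.
COMPETITIVE_METHODS = {"open"}


def contract_total(release):
    """Sum the values of all contracts in a release (missing values count as 0)."""
    return sum(
        (contract.get("value") or {}).get("amount") or 0
        for contract in release.get("contracts", [])
    )


def competitive_shares(releases):
    """Return (share_by_count, share_by_value) of competitively awarded processes."""
    competitive = [
        r for r in releases
        if (r.get("tender") or {}).get("procurementMethod") in COMPETITIVE_METHODS
    ]
    share_by_count = len(competitive) / len(releases) if releases else 0.0
    total_value = sum(contract_total(r) for r in releases)
    competitive_value = sum(contract_total(r) for r in competitive)
    share_by_value = competitive_value / total_value if total_value else 0.0
    return share_by_count, share_by_value


sample = [
    {"tender": {"procurementMethod": "open"}, "contracts": [{"value": {"amount": 300}}]},
    {"tender": {"procurementMethod": "direct"}, "contracts": [{"value": {"amount": 100}}]},
    {"tender": {"procurementMethod": "open"}, "contracts": [{"value": {"amount": 100}}]},
    {"tender": {"procurementMethod": "limited"}, "contracts": []},
]
by_count, by_value = competitive_shares(sample)
print(f"{by_count:.0%} of processes, {by_value:.0%} of contracted value")
# 50% of processes, 80% of contracted value
```

Reporting both numbers matters: a unit can run most of its processes competitively by count while the few large contracts go through direct awards, or vice versa.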

- Chile, indicators: tender periods, bidders per tender

The Chilean team analyzed several red flags through two complementary approaches: the government’s Observatorio ChileCompra and the civil society-run model proposed by Observatorio del Gasto Fiscal. The red flags that gave the whole group the most insight were abnormally short tender periods and the average number of tenderers per procurement process. Tender periods prompted a good group discussion about how the internal databases had been mapped to OCDS. The average number of bidders per tender varied significantly across procurement methods and tender values: in the small sample, public works tenders had a fifth as many bidders as other open tenders. Guillermo Burr from ChileCompra highlighted how OCDS can help benchmark and compare data across countries.
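A comparison like the Chilean one (bidders in public works versus other open tenders) can be sketched by grouping `tender.numberOfTenderers` by `tender.mainProcurementCategory`. This is an illustrative sketch under assumptions: the publisher fills in both OCDS fields, and the `group_by` and `only_method` parameters are our own choices, not part of any existing tool. The sample values are invented.

```python
from collections import defaultdict
from statistics import mean


def average_bidders(releases, group_by="mainProcurementCategory", only_method="open"):
    """Average tender.numberOfTenderers per group, optionally for one method only."""
    groups = defaultdict(list)
    for release in releases:
        tender = release.get("tender") or {}
        if only_method and tender.get("procurementMethod") != only_method:
            continue
        count = tender.get("numberOfTenderers")
        if count is None:
            continue  # a missing count is not the same as zero bidders
        groups[tender.get(group_by, "unknown")].append(count)
    return {group: mean(counts) for group, counts in groups.items()}


sample = [
    {"tender": {"procurementMethod": "open", "mainProcurementCategory": "works", "numberOfTenderers": 1}},
    {"tender": {"procurementMethod": "open", "mainProcurementCategory": "works", "numberOfTenderers": 2}},
    {"tender": {"procurementMethod": "open", "mainProcurementCategory": "goods", "numberOfTenderers": 8}},
    {"tender": {"procurementMethod": "open", "mainProcurementCategory": "goods", "numberOfTenderers": 7}},
    {"tender": {"procurementMethod": "direct", "mainProcurementCategory": "goods", "numberOfTenderers": 1}},
    {"tender": {"procurementMethod": "open", "mainProcurementCategory": "services"}},
]
print(average_bidders(sample))  # {'works': 1.5, 'goods': 7.5}
```

Skipping records without a bidder count, rather than treating them as zero, keeps a data gap from masquerading as a competition problem.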

- Colombia, indicators: amendments & changes to contract values

Representatives from the Colombian General Comptroller’s Office and the local chapter of the anti-corruption watchdog Transparency International worked with a Peruvian journalist from Ojo Público to explore what happens to contracts after they are signed. They focused on the share of contracts with amendments and the difference between the awarded value and the final contract value. Their calculations suggested that almost half of the processes had amendments, a higher share than expected, which led them to question whether this reflected reality. They identified one problem: apparently, any document published after the contract was signed, such as a supervision report, might be counted as an amendment. We have informed the procurement agency Colombia Compra Eficiente so that it can check this. When the team tried to calculate the second indicator, the discrepancy between award and final contract amounts, they found that every process in the sample seemed to have exactly the same value at award and at completion. As this would rarely be the case, they reviewed specific processes and found that there might be a problem with how officers report the final contract value and how the procurement platforms capture it.
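Both Colombian indicators can be sketched over OCDS releases as follows. This is a hedged illustration, not the team’s code. It assumes amendments are published in `contracts[].amendments` and uses `contracts[].value.amount` as a stand-in for the final contract value; the function names are hypothetical and the sample is invented.

```python
def total_amount(items):
    """Sum value.amount over awards or contracts, treating missing amounts as 0."""
    return sum((item.get("value") or {}).get("amount") or 0 for item in items)


def has_amendment(release):
    """True if any contract in the release lists at least one amendment."""
    return any(contract.get("amendments") for contract in release.get("contracts", []))


def post_award_checks(releases):
    """Share of processes with amendments, and share whose award and contract totals match."""
    amended = sum(1 for r in releases if has_amendment(r))
    # Only processes with both awards and contracts can be compared.
    comparable = [r for r in releases if r.get("awards") and r.get("contracts")]
    unchanged = sum(
        1 for r in comparable if total_amount(r["awards"]) == total_amount(r["contracts"])
    )
    return {
        "share_amended": amended / len(releases) if releases else 0.0,
        "share_value_unchanged": unchanged / len(comparable) if comparable else 0.0,
    }


sample = [
    {"awards": [{"value": {"amount": 100}}],
     "contracts": [{"value": {"amount": 100}, "amendments": [{"id": "1"}]}]},
    {"awards": [{"value": {"amount": 200}}], "contracts": [{"value": {"amount": 250}}]},
    {"awards": [{"value": {"amount": 50}}], "contracts": [{"value": {"amount": 50}, "amendments": []}]},
    {"awards": [{"value": {"amount": 75}}]},  # no contract published yet
]
print(post_award_checks(sample))
```

Two caveats mirror what the team found. The amendment share only counts whatever the publisher maps into `amendments`, so other post-signature documents mapped there will inflate it. And a `share_value_unchanged` close to 1.0 is more likely a reporting or capture problem than a sign of unusually disciplined contracts.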

- Honduras, indicator: tender periods

David Luna from ONCAE, Honduras’s national contracting and procurement office, decided to assess whether the time allowed to submit bids for any tender was unreasonably short. This would help detect processes that had potentially been steered toward specific suppliers. He argued that low competition could allow incumbent suppliers to establish an oligopoly in which they control supply and prices. About 80% of the Honduran data contained information about the tender period, but only 8% included the procurement method, which is important for evaluating bidding behavior in open tenders.
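Figures like the Honduran 80% and 8% come from a field-coverage check: the share of releases in which a given field is present and non-empty. Here is a minimal sketch, assuming releases are parsed JSON dicts and fields are addressed with slash-separated OCDS paths (the `get_path` helper and the path convention are our own). The sample is invented to reproduce a similar pattern.

```python
def get_path(obj, path):
    """Follow a slash-separated path such as "tender/tenderPeriod/endDate"; None if absent."""
    for key in path.split("/"):
        if not isinstance(obj, dict) or key not in obj:
            return None
        obj = obj[key]
    return obj


def coverage(releases, paths):
    """Share of releases in which each field is present and non-empty."""
    result = {}
    for path in paths:
        present = sum(1 for r in releases if get_path(r, path) not in (None, "", [], {}))
        result[path] = present / len(releases) if releases else 0.0
    return result


sample = [
    {"tender": {"tenderPeriod": {"endDate": "2018-03-01T00:00:00Z"}, "procurementMethod": "open"}},
    {"tender": {"tenderPeriod": {"endDate": "2018-03-05T00:00:00Z"}}},
    {"tender": {"tenderPeriod": {"endDate": "2018-04-10T00:00:00Z"}}},
    {"tender": {"tenderPeriod": {"endDate": "2018-05-02T00:00:00Z"}}},
    {"tender": {}},
]
print(coverage(sample, ["tender/tenderPeriod/endDate", "tender/procurementMethod"]))
# {'tender/tenderPeriod/endDate': 0.8, 'tender/procurementMethod': 0.2}
```

Running a check like this before calculating any red flag tells you which indicators the data can actually support.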

- Mexico, indicators: tender periods & values

Two groups chose to work with the Mexican federal-level data. The first group looked at the tender period. By law, national open tenders should have a tender period of at least 15 days, while international ones should be open for at least 20 days. None of the processes in the sample broke that rule, but the analysts highlighted that Compranet, Mexico’s federal procurement platform, is used in only 36% of competitive processes. The second group found that most tender values in the sample were recorded as 0 and that only 8% corresponded to open procedures. Something to check in the complete dataset!
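The Mexican legal-minimum check can be sketched as follows. This is an illustration under stated assumptions. OCDS has no standard field marking a tender as national or international, so `tender.scope` below is a hypothetical field. The period is measured in calendar days between `tenderPeriod.startDate` and `tenderPeriod.endDate`, which may not match exactly how the law counts days.

```python
from datetime import datetime

# Minimum tender periods (in days) for open tenders, per the rule described above.
MIN_DAYS = {"national": 15, "international": 20}


def parse_ocds_date(value):
    """Parse an ISO 8601 timestamp; swap a trailing 'Z' for Python < 3.11."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def tender_days(release):
    """Length of the tender period in (fractional) days, or None if dates are missing."""
    period = (release.get("tender") or {}).get("tenderPeriod") or {}
    if not period.get("startDate") or not period.get("endDate"):
        return None
    delta = parse_ocds_date(period["endDate"]) - parse_ocds_date(period["startDate"])
    return delta.total_seconds() / 86400


def below_legal_minimum(releases):
    """Return (ocid, days) for tenders shorter than the minimum for their scope."""
    flagged = []
    for release in releases:
        days = tender_days(release)
        scope = (release.get("tender") or {}).get("scope")  # hypothetical field
        if days is None or scope not in MIN_DAYS:
            continue
        if days < MIN_DAYS[scope]:
            flagged.append((release["ocid"], round(days, 1)))
    return flagged


sample = [
    {"ocid": "ocds-1", "tender": {"scope": "national", "tenderPeriod": {
        "startDate": "2018-01-01T00:00:00Z", "endDate": "2018-01-20T00:00:00Z"}}},
    {"ocid": "ocds-2", "tender": {"scope": "international", "tenderPeriod": {
        "startDate": "2018-02-01T00:00:00Z", "endDate": "2018-02-15T00:00:00Z"}}},
    {"ocid": "ocds-3", "tender": {"scope": "national"}},
]
print(below_legal_minimum(sample))  # [('ocds-2', 14.0)]
```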

- Paraguay, indicator: values across the procurement cycle

The Paraguayan team followed the value through the different stages of the procurement process: from budget planning, through tender and award, to contract. This is useful for checking whether planning is working correctly and for identifying possible wrongdoing. They used stringent thresholds and checked for processes with any of the following characteristics: more than 5% difference between awarded and tender value; more than 10% difference between tender and awarded value; and any difference, however slight, between awarded and contract value. They detected municipal public works processes where the awarded value differed from the contract value.
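Following a value from stage to stage can be sketched as a list of rules. Each rule compares an earlier stage with a later one and allows a maximum relative difference. This is a hedged sketch. The paragraph lists the tender/award comparison twice, so the pairings in the hypothetical `RULES` list are illustrative: it assumes the 5% rule compares the planned budget (`planning.budget.amount.amount`) with the tender value. The sample figures are invented.

```python
def amount(obj, *keys):
    """Follow nested keys and return the final value, or None if any level is missing."""
    for key in keys:
        if not isinstance(obj, dict):
            return None
        obj = obj.get(key)
    return obj


def summed(items):
    """Sum value.amount over awards or contracts; None if no amounts are present."""
    amounts = [amount(item, "value", "amount") for item in items or []]
    amounts = [a for a in amounts if a is not None]
    return sum(amounts) if amounts else None


def stage_values(release):
    return {
        "planning": amount(release, "planning", "budget", "amount", "amount"),
        "tender": amount(release, "tender", "value", "amount"),
        "award": summed(release.get("awards")),
        "contract": summed(release.get("contracts")),
    }


# Illustrative (earlier stage, later stage, maximum relative difference); 0.0 flags any change.
RULES = [("planning", "tender", 0.05), ("tender", "award", 0.10), ("award", "contract", 0.0)]


def value_flags(release, rules=RULES):
    values = stage_values(release)
    flags = []
    for earlier, later, threshold in rules:
        a, b = values[earlier], values[later]
        if a is None or b is None or a == 0:
            continue  # cannot compare missing or zero values
        if abs(b - a) / a > threshold:
            flags.append(f"{earlier}->{later}")
    return flags


sample = {
    "planning": {"budget": {"amount": {"amount": 1000}}},
    "tender": {"value": {"amount": 1040}},
    "awards": [{"value": {"amount": 900}}],
    "contracts": [{"value": {"amount": 905}}],
}
print(value_flags(sample))  # ['tender->award', 'award->contract']
```

Keeping the thresholds in data rather than in code makes it easy to adapt them to each jurisdiction’s rules.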

A quick note on our methodology

Each group’s dataset consisted of a sample of 500 procurement processes (OCDS releases) from 2018, selected from the (often huge) amounts of data available in each jurisdiction. We used the OCDS Flatten Tool to convert each JSON file into two tabular files: one with field names in Spanish and the other in English. We also provided coverage tables showing which fields were present in each dataset and what share of procurement processes included that information.
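To see what “flattening” means, here is a minimal sketch. It turns nested OCDS JSON into one CSV row per release, with column headings built from slash-separated paths and an optional mapping to translated headings. This is not Flatten Tool itself, which handles arrays and schema-based headings far more thoroughly. The function names and the Spanish heading mapping are hypothetical.

```python
import csv
import io


def flatten(obj, prefix=""):
    """Flatten nested dicts and lists into {"tender/value/amount": 100, "awards/0/id": "a1"}."""
    items = {}
    if isinstance(obj, dict):
        for key, value in obj.items():
            items.update(flatten(value, f"{prefix}{key}/"))
    elif isinstance(obj, list):
        for index, value in enumerate(obj):
            items.update(flatten(value, f"{prefix}{index}/"))
    else:
        items[prefix.rstrip("/")] = obj
    return items


def releases_to_csv(releases, headings=None):
    """Return CSV text with one row per release; `headings` optionally renames columns."""
    rows = [flatten(release) for release in releases]
    columns = sorted({column for row in rows for column in row})
    headings = headings or {}
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow([headings.get(column, column) for column in columns])
    for row in rows:
        writer.writerow([row.get(column, "") for column in columns])
    return out.getvalue()


releases = [
    {"ocid": "ocds-1", "tender": {"value": {"amount": 100}}, "awards": [{"id": "a1"}]},
    {"ocid": "ocds-2", "tender": {"value": {"amount": 250}}},
]
spanish = {"tender/value/amount": "licitación/valor/monto"}  # hypothetical translation
print(releases_to_csv(releases))
print(releases_to_csv(releases, headings=spanish))
```

Producing both files from the same flattened rows keeps the English and Spanish versions column-for-column identical.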

Each team included a policy expert, a data curator, a data analyst and a data storyteller, a mix that drew on our talented partners’ skills to analyze, design, develop and implement mini-models. Participants chose their preferred tool for calculating the red flags, from Excel spreadsheets to R scripts and even OpenRefine. It was encouraging to see that these flags can be calculated in practice, regardless of the technical level of the person doing the analysis.

The data expeditions took place on the second day of the workshop, after we had introduced participants to red flag models used elsewhere (see OCP’s own work on red flags). Anastasiya Kozlovtseva shared TI Ukraine’s model for the citizen-reporting platform Dozorro, which analyzes 40 risk indicators and is supported by machine learning. As of June, the platform has almost a million users and has identified more than 200,000 violations of contracting rules. Anastasiya also explained their “Tinder for Tender” tool, a clever application of the model that procurement experts used internally to classify suspicious contracting processes.

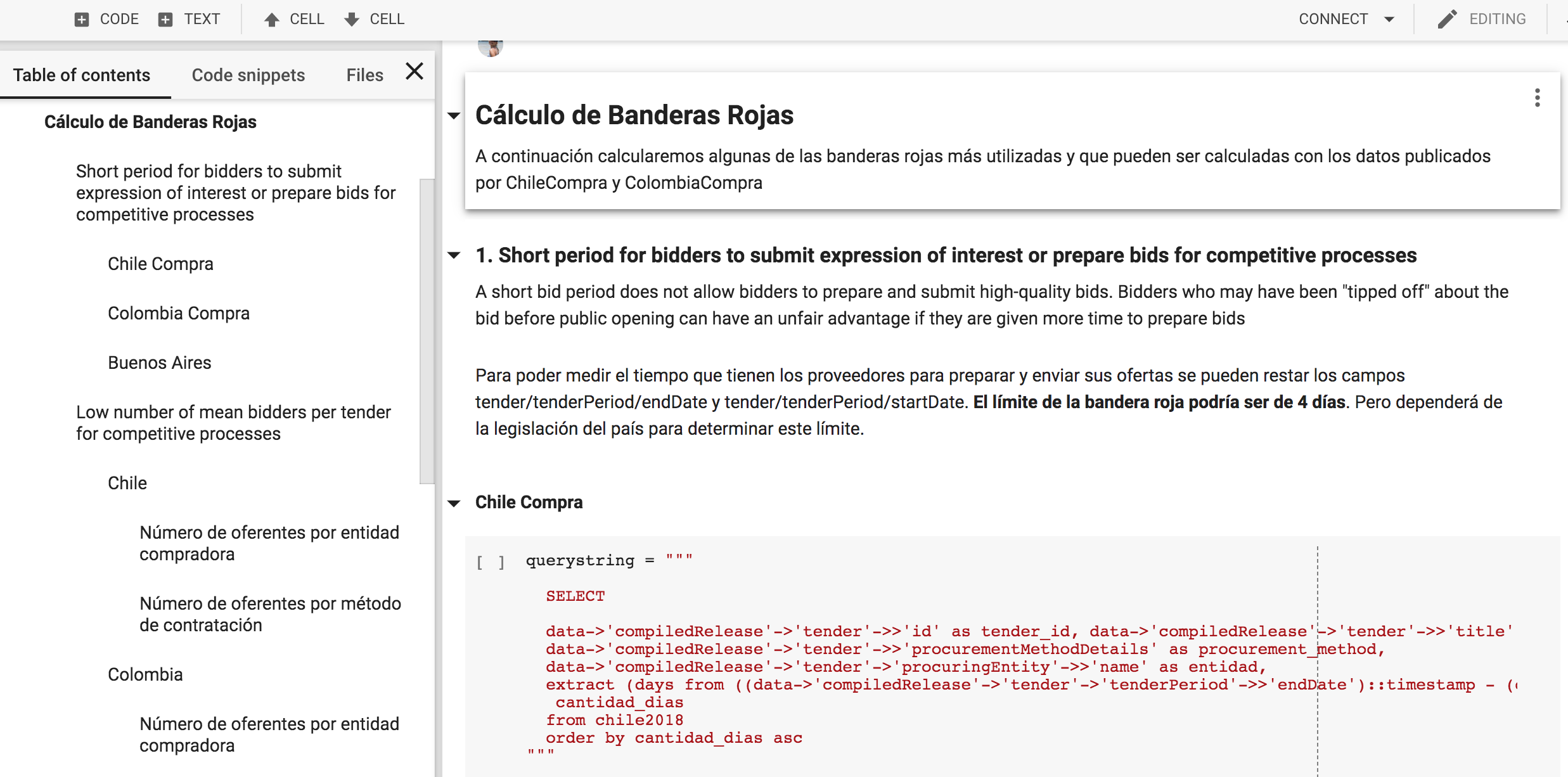

We showed how five of the most commonly used red flags can be calculated, using a Google Colaboratory notebook and data from Colombia Compra Eficiente and ChileCompra that we had gathered with the OCDS Kingfisher tool. A great discussion started as we went through the results, put the numbers and indicator thresholds in context, and identified similarities between the countries. What signals a risky process in one country could be normal in another jurisdiction and outright banned elsewhere.

The idea for this workshop emerged from our collaboration with Chilean civil society partners Observatorio del Gasto Fiscal and Chile Transparente, who have pushed from within ChileCompra’s civil society council (COSOC) for the use of open data to increase integrity. The government procurement agency ChileCompra has also supported the initiative, seeing the potential in having civil society lead the development of such a model.

What next for red flags in Latin America?

One of the big takeaways was how standardized data can streamline efforts across jurisdictions, helping countries that want to monitor procurement indicators and identify red flags to share models and knowledge more effectively.

Going through the data also helped identify possible mapping and quality problems: when looking at tender periods, we saw that some agencies in Colombia apparently accept bids for less than one day, which points to a probable issue with how the data is captured in the procurement platforms.
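One way to keep such data problems from being reported as red flags is to separate the two in the check itself. This is a minimal sketch that treats tender periods under one day (or negative) as “check data” and flags only longer periods that fall below a threshold. The five-day `SHORT_PERIOD_DAYS` value is a hypothetical placeholder, since a suitable threshold differs by jurisdiction. Dates are assumed to be ISO 8601 with an explicit timezone.

```python
from datetime import datetime

SHORT_PERIOD_DAYS = 5  # hypothetical red-flag threshold; set it per jurisdiction


def parse_ocds_date(value):
    """Parse an ISO 8601 timestamp; swap a trailing 'Z' for Python < 3.11."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def classify_tender_period(release, short_days=SHORT_PERIOD_DAYS):
    """Label a tender period as 'missing', 'check data', 'red flag' or 'ok'."""
    period = (release.get("tender") or {}).get("tenderPeriod") or {}
    start, end = period.get("startDate"), period.get("endDate")
    if not start or not end:
        return "missing"
    days = (parse_ocds_date(end) - parse_ocds_date(start)).total_seconds() / 86400
    if days < 1:
        return "check data"  # under a day (or negative): likely a capture or mapping issue
    if days < short_days:
        return "red flag"
    return "ok"


sample = [
    {"tender": {"tenderPeriod": {"startDate": "2018-05-02T09:00:00Z", "endDate": "2018-05-02T17:00:00Z"}}},
    {"tender": {"tenderPeriod": {"startDate": "2018-05-01T00:00:00Z", "endDate": "2018-05-04T00:00:00Z"}}},
    {"tender": {"tenderPeriod": {"startDate": "2018-05-01T00:00:00Z", "endDate": "2018-05-21T00:00:00Z"}}},
    {"tender": {}},
]
print([classify_tender_period(r) for r in sample])
# ['check data', 'red flag', 'ok', 'missing']
```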

Other participants realized that the data needed to calculate some of the red flags most relevant to them wasn’t available, especially for the implementation stage, which is seldom published. All of these findings will help inform improvements to data validation that should lead to better-quality data.

Against the backdrop of the gorgeous snow-capped Andes, we closed the workshop with participants making concrete commitments for further developments. Pablo Seitz, the director of Paraguay’s public contracting agency (DNCP), agreed to publish at least three red flags on their website for public monitoring; Mexican and Chilean representatives committed to forming working groups to advance this subject in their countries; and Colombia pledged to use OCDS data for fiscal audits. Buenos Aires and Honduras promised to build on their recent OCDS data publication to start setting up tools such as the ones discussed.

We believe this could be the starting point to better track what’s happening through efficient red flag models in Latin America that can serve to transform public contracting. Our helpdesk is ready to answer all your questions, so don’t be shy and tell us what you think!